This is niche enough that I’m almost embarrassed to post it, but by adding this to the industry knowledge I may save someone else 15 minutes of frustration.

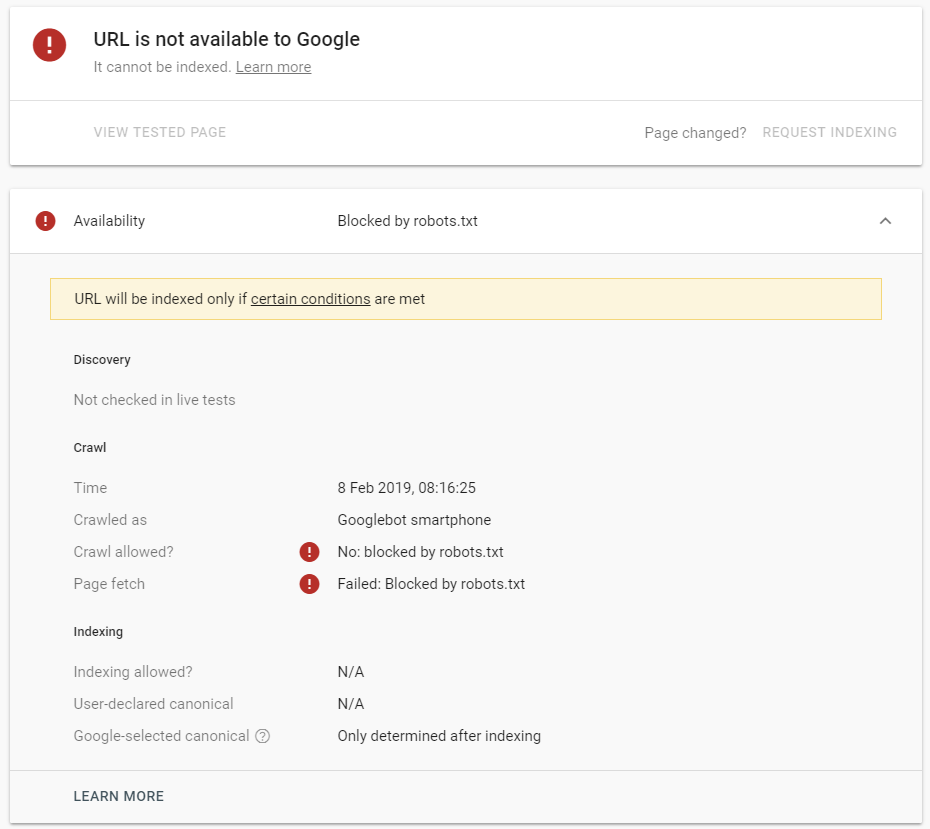

If you run a fresh URL Inspection in Google Search Console on a live asset that isn’t a page, it will claim the URL is blocked in robots.txt.

If you want to see how an image is being interpreted right now, you can’t. Search Console will say it’s blocked in robots.txt and refuse to fetch it.

Try it yourself.

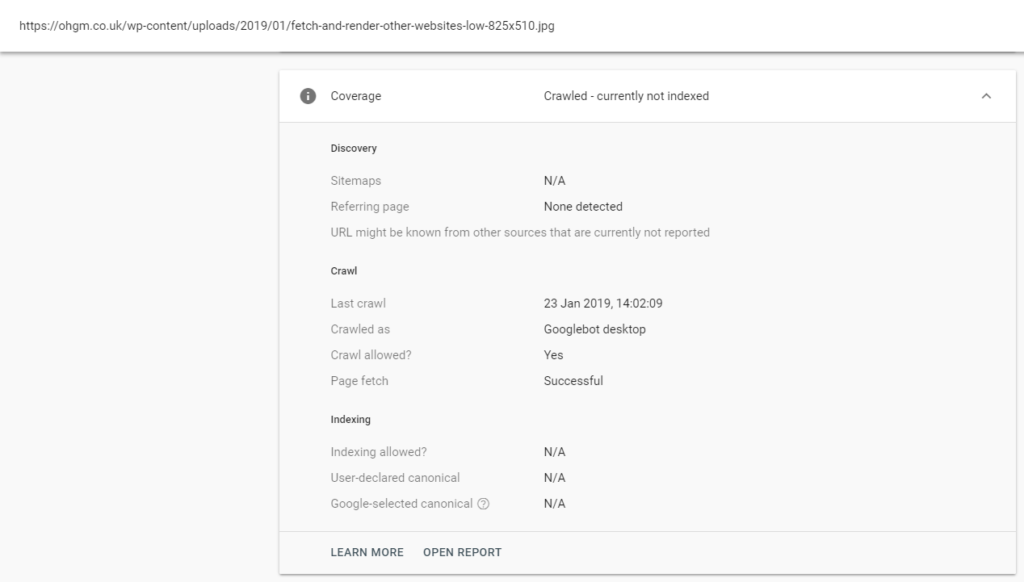

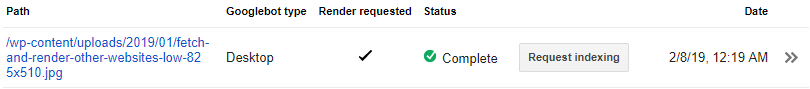

Request an image in URL inspect:

Now request the live URL:

Now get told it’s blocked in robots.txt:

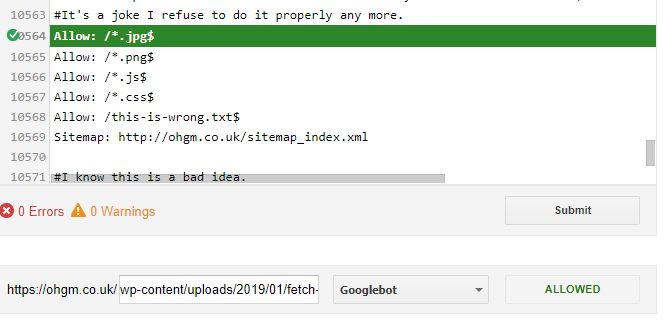

Use the link to test if it’s blocked in robots.txt to confirm it isn’t blocked in robots.txt:
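If you'd rather double-check outside Search Console, here is a minimal sketch using Python's standard `urllib.robotparser`. The robots.txt content and the example.com URLs are made up for illustration, and Python's parser does not implement every detail of Google's matching rules (wildcards, for instance), so treat it as a sanity check rather than a verdict.

```python
from urllib.robotparser import RobotFileParser


def is_blocked(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the given robots.txt text disallows `url` for `user_agent`."""
    parser = RobotFileParser()
    # parse() accepts an iterable of lines, so no network request is needed here.
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


# Hypothetical robots.txt, for illustration only.
EXAMPLE_ROBOTS = """\
User-agent: *
Disallow: /private/
"""

print(is_blocked(EXAMPLE_ROBOTS, "https://example.com/images/logo.png"))
print(is_blocked(EXAMPLE_ROBOTS, "https://example.com/private/logo.png"))
```

To test a real site, paste in the contents of its robots.txt (or use `set_url()` and `read()` on the parser to fetch it) and pass the image URL that Search Console is complaining about.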

Now waste far too long on trying to solve this.

Check your logs to confirm it’s being crawled (it is). Try to track down the cause and discover something else far more useful in the process.
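For the log check, something like the following rough sketch would do. It assumes your server writes the common "combined" access log format; the sample lines, IPs, and path are invented for illustration. Matching on the user-agent string alone can be fooled by spoofed requests, so this only shows that something calling itself Googlebot asked for the file.

```python
import re

# Assumed format: Apache/Nginx "combined" access log.
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines, path):
    """Return (timestamp, status) for each request to `path` whose user agent mentions Googlebot."""
    hits = []
    for line in lines:
        m = LOG_LINE.match(line)
        if m and m["path"] == path and "Googlebot" in m["agent"]:
            hits.append((m["time"], int(m["status"])))
    return hits


# Made-up sample lines, for illustration only.
SAMPLE = [
    '66.249.66.1 - - [10/Oct/2019:13:55:36 +0000] "GET /images/logo.png HTTP/1.1" 200 5120 "-" "Googlebot-Image/1.0"',
    '203.0.113.9 - - [10/Oct/2019:13:56:01 +0000] "GET /images/logo.png HTTP/1.1" 200 5120 "-" "Mozilla/5.0"',
]

print(googlebot_hits(SAMPLE, "/images/logo.png"))
```

In practice you would pass an open log file instead of `SAMPLE`, since a file object iterates line by line.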

Repeat on multiple websites to confirm it’s just how Search Console works for most things that aren’t pages or redirects.

- If it’s a redirect, Google will assess the status and filetype of the destination.

- If it’s a 404 or another error, Google will abandon the page.

- There are some other spooky things here but I’m saving them for ohgmcon.

If you change a supposedly “blocked in robots.txt” URL to a redirect, it becomes crawlable, which suggests that the URL isn’t really blocked and that this message is shorthand for a hardcoded “Oliver, please stop clogging Google Search Console with this shit.”:

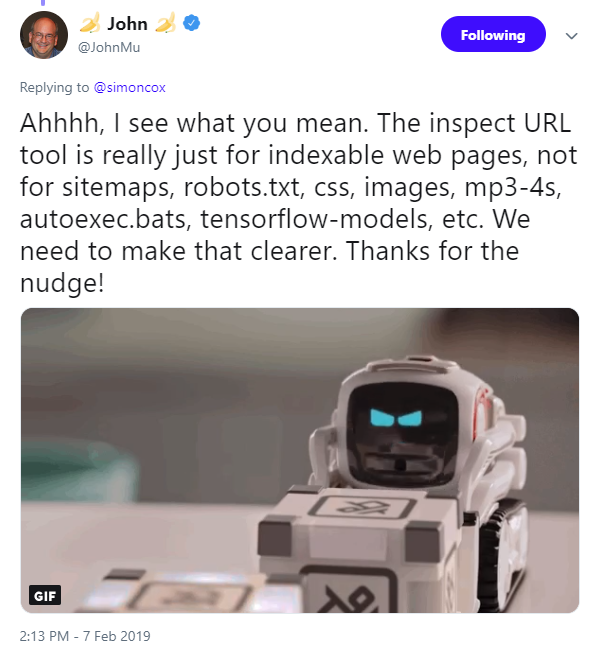

And this makes sense – this tool is about the indexing of documents, not stuff.

But sometimes this stuff makes it into Search Console Coverage reports.

Why Would I Care?

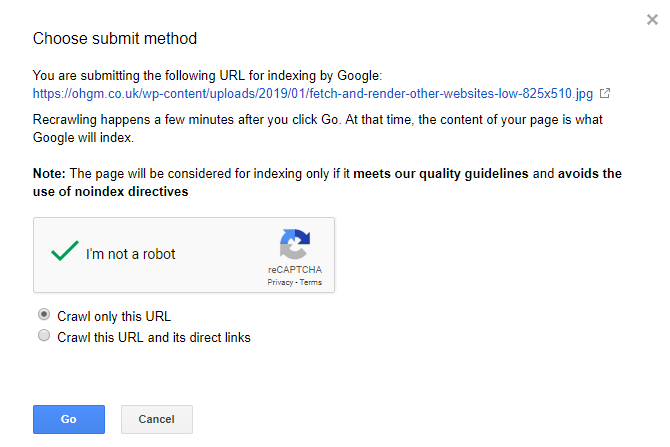

Previously you could submit whatever you wanted for indexing through Search Console’s Fetch & Render.

You might miss this functionality, even if it was placebo.

Hopefully the error messaging here is updated soon to reflect reality.

I ran into this on an image a few days ago and was running around trying to diagnose a robots.txt issue that was obviously not there!

You just saved me 15 or more on …car insurance… I mean time. Thank you SEO thought leader.