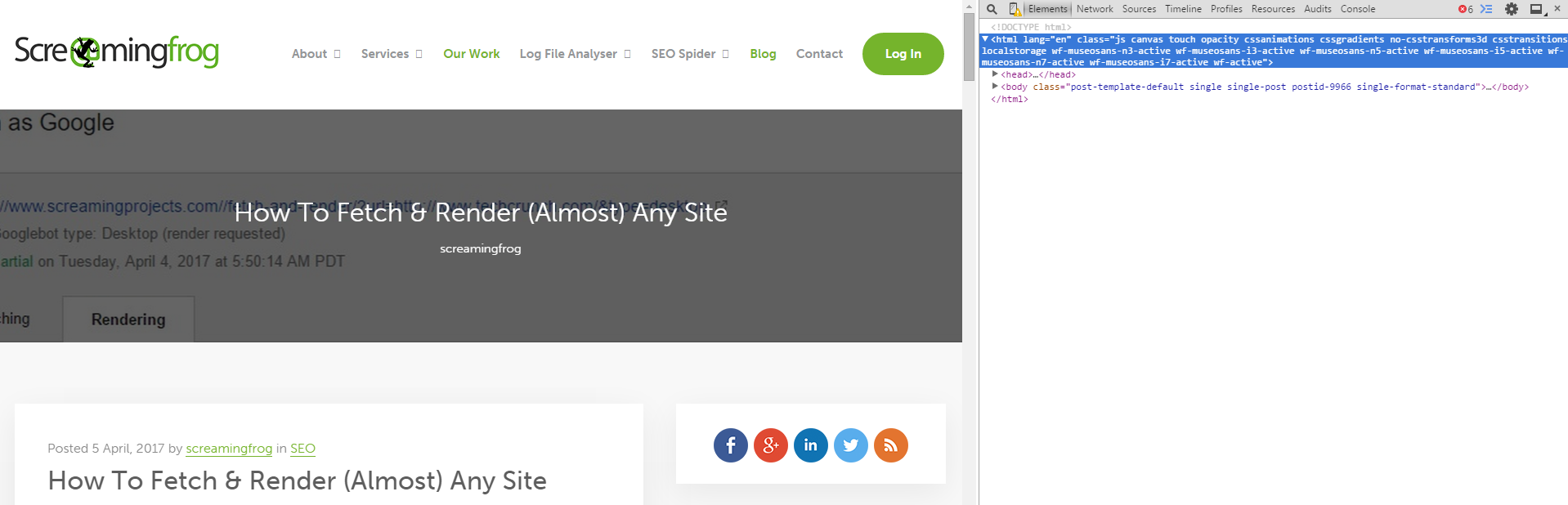

This post is the spiritual successor to How to Fetch and Render (almost) Any Site, a blog post I did not write but wish I had.

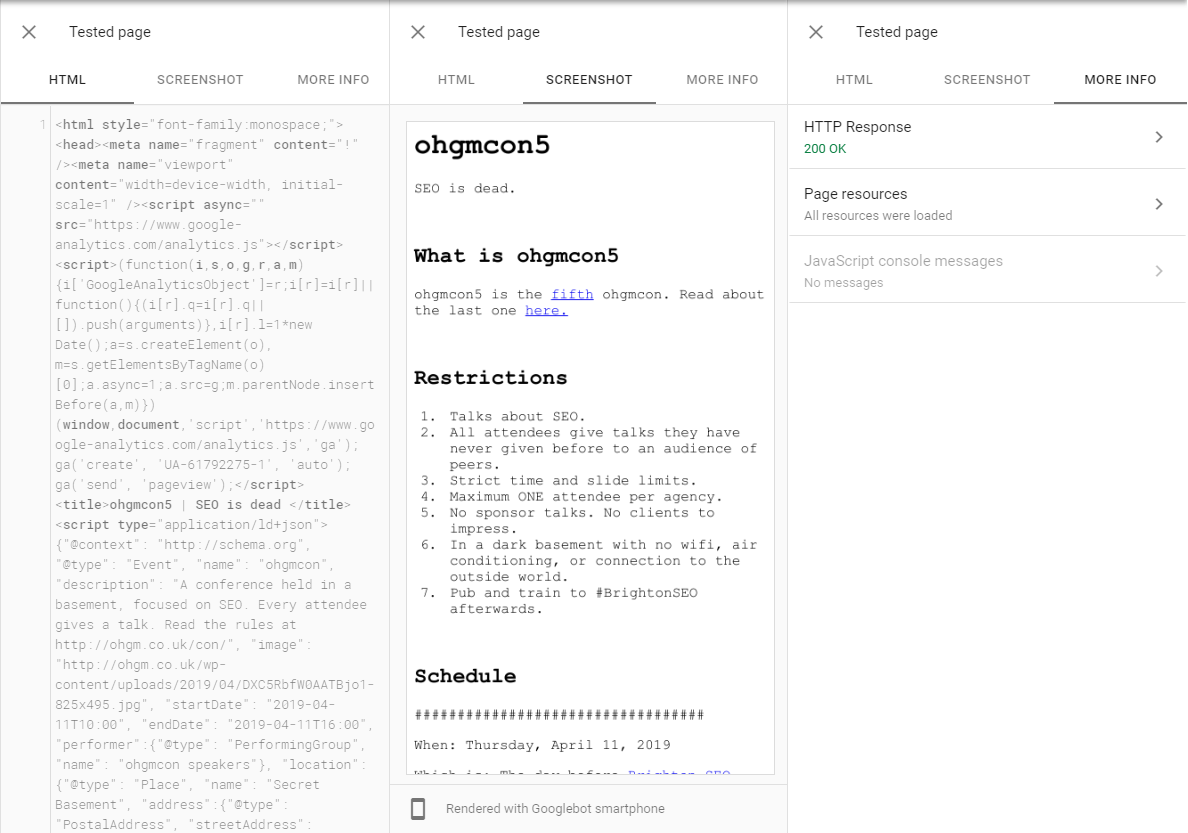

The new version of the URL Inspection tool is very useful for diagnosing issues caused by Googlebot’s rendering peculiarities:

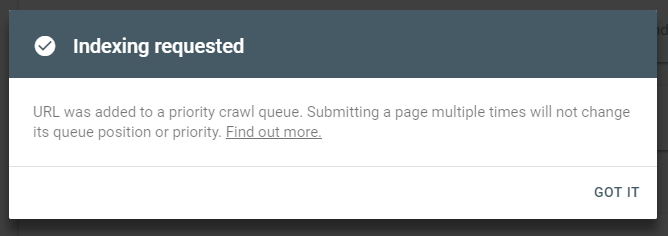

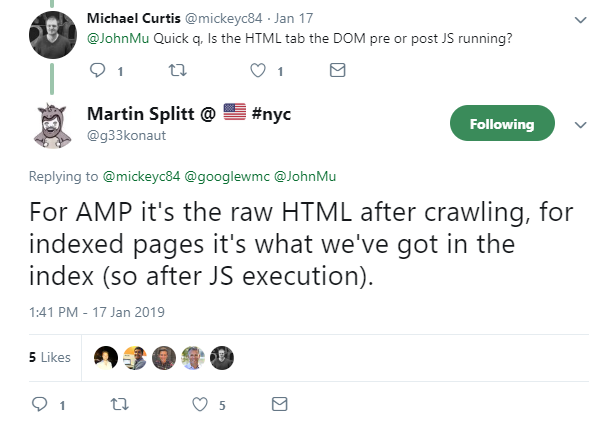

Crucially, it includes the result of JS execution:

However, if you don’t have access to a Search Console property, you cannot inspect the URL:

I’ll keep this brief:

You can bypass this restriction with cross-domain redirects.

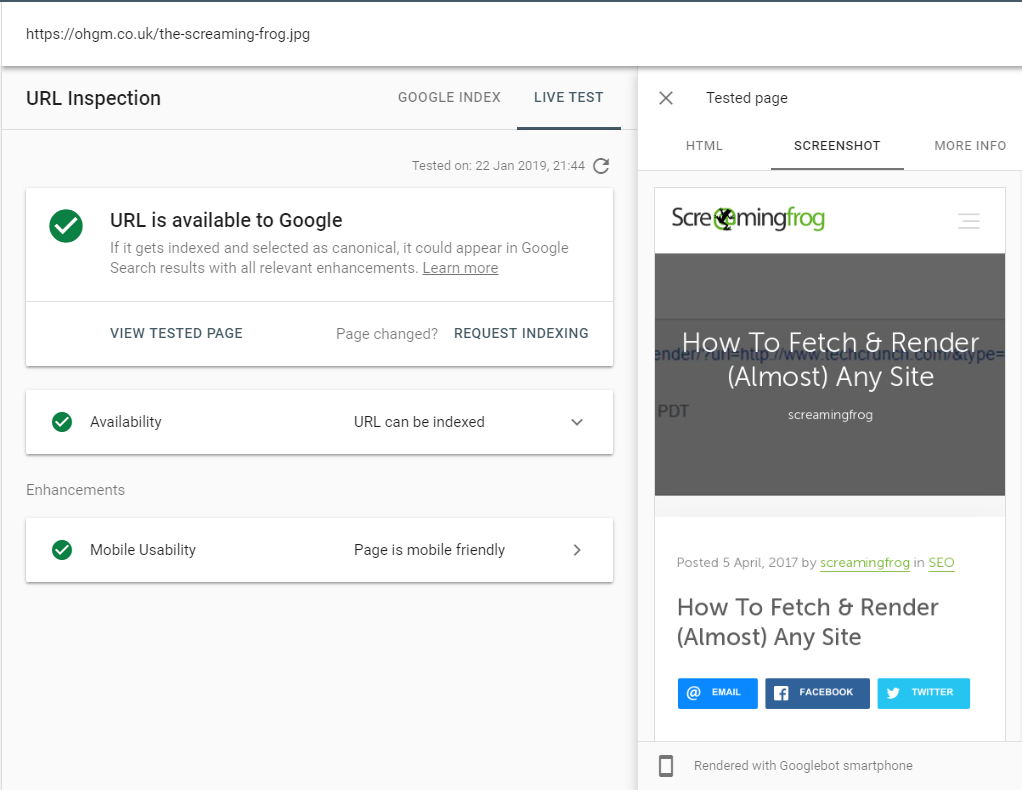

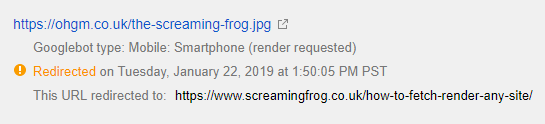

Paying homage to the progenitor:

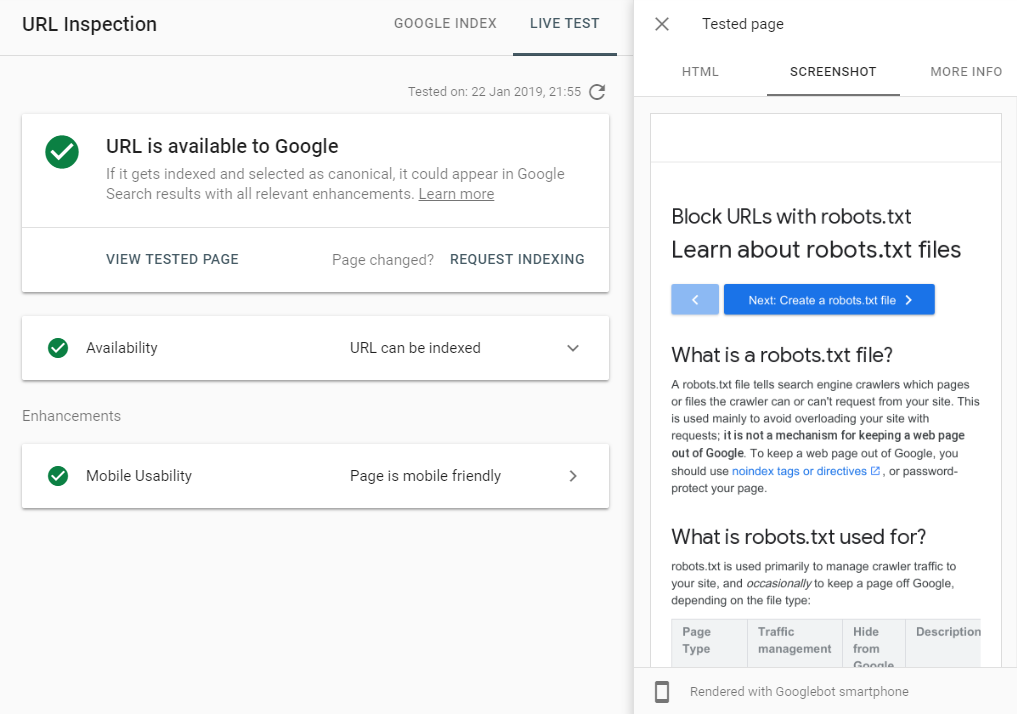

This is the Search Console account for ohgm.co.uk, accessing data that’s intentionally limited to the screamingfrog.co.uk Search Console account. Here’s another:

Method

The method is simple:

- Have a Search Console account for a website you control.

- On that website, set up a redirect pointing to the desired URL on a property you don’t control.

- Inspect the redirected URL in Search Console.

- Test Live URL.

That’s it.
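For the redirect step, here is a minimal sketch of what the redirecting side could look like, using only Python's standard library. The path `/inspect-me`, the `example.com` destination, the port, and the choice of a 301 are all assumptions for illustration; any redirect your own site can serve (an `.htaccess` rule, a CMS plugin) should do the same job.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical mapping: path on the site you control -> URL you want rendered.
REDIRECTS = {
    "/inspect-me": "https://www.example.com/some-page/",
}


def resolve_redirect(path):
    """Return the destination for a request path, or None if unmapped."""
    # Ignore any query string when looking up the path.
    return REDIRECTS.get(path.split("?", 1)[0])


class RedirectHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        target = resolve_redirect(self.path)
        if target is None:
            self.send_error(404)
            return
        # 301 is an assumption; the redirect type is not specified in the post.
        self.send_response(301)
        self.send_header("Location", target)
        self.end_headers()


def serve(port=8000):
    """Serve redirects until interrupted (port is an arbitrary choice)."""
    HTTPServer(("", port), RedirectHandler).serve_forever()
```

Calling `serve()` on a host behind your verified property, then inspecting `https://your-site/inspect-me` and hitting Test Live URL, corresponds to steps two to four above.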

You don’t get to choose the User-Agent as you could with Fetch and Render, but the old Fetch and Render feature never allowed redirects to be rendered anyway:

URL Inspect uses the User-Agent that indexes the website, and in most cases this will be Googlebot Smartphone. The important takeaway is that we can render what we want using genuine Googlebot.

How To View More Than a Preview

The Rendered Source provided can be copied and pasted out (unlike some other Google tools), so even though the preview only provides a limited above-the-fold snapshot, you can still make use of it. To do this:

- Copy the HTML from the tested page.

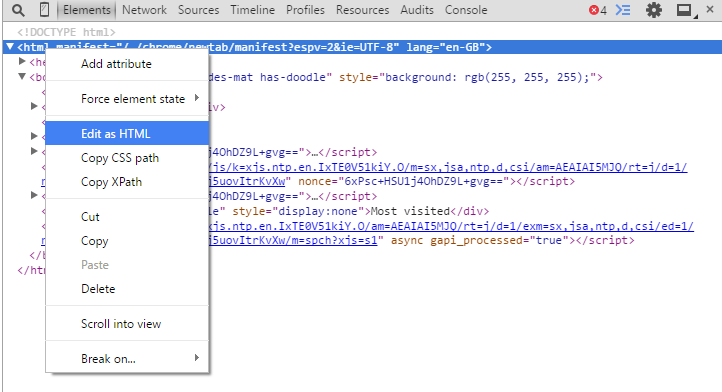

- Inspect Element in Chrome.

- Edit as HTML.

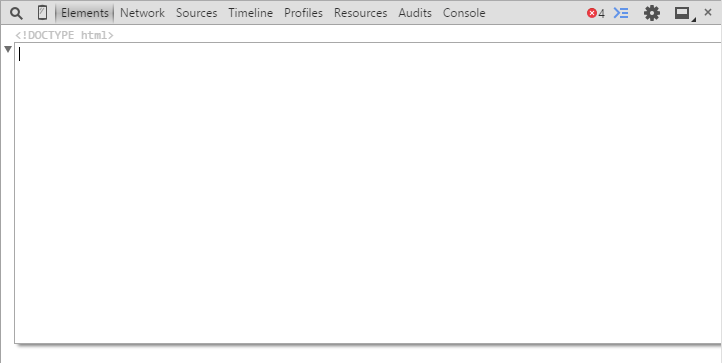

- Overwrite by pasting in what Googlebot rendered.

- Hit enter to view the full rendered webpage.

I’m doing this with Chrome 41 for that musty smelling authenticity (and to head off some comments). This technique doesn’t just apply to other people’s websites; it can also be used to note the rendering differences with your own (especially below the fold). It can be really useful.
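If you would rather keep the rendered HTML as a file than paste it into DevTools, one option is to save it with a `<base>` tag so relative images and stylesheets resolve against the original site. This is a rough sketch and not part of the original method; `add_base_href` and its parameters are hypothetical names.

```python
import html
import re


def add_base_href(rendered_html, page_url):
    """Insert a <base> tag so relative URLs resolve against page_url."""
    base_tag = '<base href="{}">'.format(html.escape(page_url, quote=True))
    # Find the opening <head ...> tag (the \b stops this matching <header>).
    head_open = re.search(r"<head\b[^>]*>", rendered_html, flags=re.IGNORECASE)
    if head_open is None:
        # No <head> found: fall back to prepending the tag.
        return base_tag + rendered_html
    i = head_open.end()
    return rendered_html[:i] + base_tag + rendered_html[i:]
```

Writing the result to a local `.html` file and opening it in the browser should give a similar full-page view, with the caveat that any scripts left in the markup will run again locally.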

Limitations

If the destination is blocked in robots.txt, this won’t work. There are probably other restrictions (especially if they decide to fix this), but I’m too excited by the possibilities to investigate them now.
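Before setting up a redirect, you can roughly check whether the destination is blocked. The sketch below uses Python's built-in `urllib.robotparser` on robots.txt text you have already downloaded. Its matching is simpler than Google's own parser (it does not handle wildcards, for instance), so treat the answer as a hint. `googlebot_allowed` is a hypothetical helper name.

```python
from urllib.robotparser import RobotFileParser


def googlebot_allowed(robots_txt, url, user_agent="Googlebot"):
    """Return True if robots_txt appears to allow user_agent to fetch url."""
    parser = RobotFileParser()
    # parse() takes the file as a list of lines, so no network access is needed.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


robots = "User-agent: *\nDisallow: /private/\n"
print(googlebot_allowed(robots, "https://www.example.com/private/page"))
print(googlebot_allowed(robots, "https://www.example.com/public/page"))
```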

Thankfully there don’t seem to be limits on the frequency with which URLs can be tested and retested (yet) so this can be automated. Perhaps more worryingly:

This can be used to submit other people’s content for indexing

Nothing exploitable there.

Other Ideas

This technique is potentially useful for sales, as it can give you information you shouldn’t yet have access to (e.g. by looking at the UA, you can see if a site is/isn’t under mobile first indexing). It’s a great way to quickly see if there are any blocked resource problems and to get started while waiting to be granted Search Console access.
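To make the User-Agent check concrete: if you have the UA string to hand (for example from the access log of the site doing the redirect), a crude classifier might look like this. The substring rules are an assumption based on Google's published Googlebot Smartphone and Desktop strings, and a User-Agent alone proves nothing about who actually sent the request.

```python
def classify_googlebot(user_agent):
    """Label a User-Agent string as smartphone or desktop Googlebot."""
    if "Googlebot" not in user_agent:
        return "not googlebot"
    # Googlebot Smartphone presents itself as mobile Chrome on Android.
    if "Android" in user_agent and "Mobile" in user_agent:
        return "smartphone"
    return "desktop"


smartphone_ua = (
    "Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) "
    "AppleWebKit/537.36 (KHTML, like Gecko) Chrome/41.0.2272.96 "
    "Mobile Safari/537.36 (compatible; Googlebot/2.1; "
    "+http://www.google.com/bot.html)"
)
print(classify_googlebot(smartphone_ua))
```

A "smartphone" result suggests, but does not prove, that the site is being indexed mobile-first.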

Bypass Paywalls

Since it’s genuine Googlebot making the request from a genuine Googlebot IP address, you can use it to bypass most blocking. I can confirm this bypasses paywalls which allow Googlebot to crawl (e.g. most news websites).

Verify Cloaking

- If you suspect a competitor is cloaking, this will show you.

- If you want to check your cloak is working properly, this will show you.

I’m sure you’ll have some ideas for how to use this. Please share any devilish ones in the comments.

This only has slim advantages over using the Mobile-Friendly Test tool, but I’ll take whatever I can get.

Be good.

I wish there was a level of knowledge between “public enough for me to know about it” and “private enough for Mueller not to know about it” and that this information was at that level.

I give it about 3 days before this feature is “deprecated”. Brilliant catch.

it’s been more than 3 years til now, and it’s still working fine :D

What will it do about urls on the other site which do not exist? Have you tested?

you will end up on a 404?? what sort of question is this?

Omg poor Google. Guess they don’t know about this yet. Once they notice this, it will be fixed in no time. Imagine having access to other people’s properties without their consent. This feature is not likely to be up for long

This is awesome! I am afraid it will be patched soon. So need to get the most out of it. Thanks so much for the brilliant catch.

This is a seriously more than amazing post! Thanks for showing it to us.

Awesome article. Thank you for sharing.

That Oranssi Pazuzu’s pic tho