Like most of my posts this is not worth implementing in any manner. This is unlimited budget SEO. Works in theory SEO. This is almost make-work.

There are no brakes on the marginal gains train.

Theory

We believe that broken links leak link equity. We also believe that pages provide a finite amount of link equity, and anything hitting a 404 is wasted rather than diverted to the live links.

The standard practice is to swoop in and suggest a resource that you have a vested interest in to replace the one that’s died. There is a small industry dedicated to doing just this. It works, but requires some resource.

If we instead get the broken links removed, the theory goes, we increase the value of all the links remaining on the page. You can increase the value of external links to your site by getting dead links on the pages that host them removed.

You could, of course, broken link-build here instead. You already have a link, and if you have the resources it’s going to be superior in equity terms. But the methodology is the same – and you’ve probably not gone out of your way before to broken linkbuild on pages you already have a link from.

Practice

- Get a list of URLs linking to your site.

- Extract and pull headers for every link on these pages.

- If appropriate, contact the pages hosting dead links or dead redirects suggesting removal.

To make our task as easy as possible we will need a list of broken links with the pages that spawned them.

Get Links

Majestic, Moz, Ahrefs, and Search Console. Crawl these for the live links. Whittle down to the ones you actually like – cross reference with your disavow file and make sure the links are only going to live pages on your site. I’d heavily suggest limiting this to followed URLs if you want any value out of this technique.

Extract Links and Crawl for Errors

We now need to take linksilike.txt and crawl each page, extracting every link as we go. We then need to get the server header responses from each of these links. Although writing a script to do this is probably the best way, the easiest way is to use tools we already have at our disposal. It’s overkill compared to getting the server header responses.

Unfortunately you can‘t use Screaming Frog in list mode to do this (correct me if I’m wrong). You need to crawl a depth one deeper than the list. To do this, first switch to list mode, then set the crawl depth limit to one (Configuration > Spider > Limits). If you do it the other way round you’ll end up writing misinformation on the internet.

Let’s pretend we have something against Screaming Frog, so we download Xenu – it’s what was used before Screaming Frog.

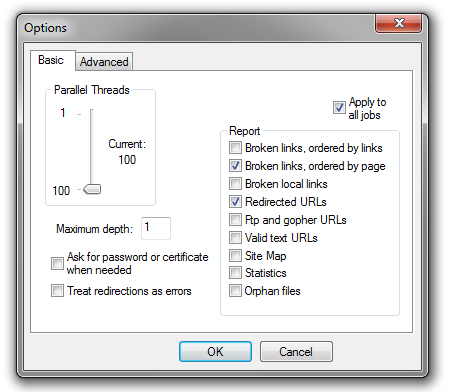

This is a heavier task than you might expect. A sample of the links from Search Console to this site yielded ~15k URLs to be checked. There is a 64bit Xenu beta available if you require more RAM. Here are the settings I’ve found most useful:

Check ‘Check External URLs’ under options.

File > Check URL list (test) to load in your URLs and start crawling.

Once complete, hit CTRL-R a few times to recrawl all the error pages (404’s will remain so, generic errors may be updated to more useful ones).

The main issue here is that by default Xenu isn’t going to give us the information we want in a nice TSV export. It does, however, offer us what we want in the standard html report – we get a list of URLs that link to us with a list of errors that stem from them. They typically take the following form:

http://01100111011001010110010101101011.co.uk/paid-links/ http://sim-o.me.uk/ \_____ error code: 410 (the resource is no longer available) http://icreatedtheuniverse/ \_____ error code: 12007 (no such host) http://www.carsoncontent.com/ \_____ error code: 12007 (no such host) http://www.seo-theory.com/ \_____ error code: 403 (forbidden request) http://www.halo18.com/ \_____ error code: 12007 (no such host)

http://seono.co.uk/2013/04/30/whats-the-worst-link-youve-ever-seen/ http://seono.co.uk/xmlrpc.php \_____ error code: 405 (method is not allowed) https://twitter.com/jonwalkerseo \_____ error code: 404 (not found) http://www.alessiomadeyski.com/who-the-fuck-is-he/ \_____ error code: 404 (not found) https://www.rbizsolutions.com.au/ \_____ error code: 12029 (no connection)

This is all we need to get started.

Decide if Appropriate

Although you can filter for a few things, you’re stuck doing this manually.

If the page links to both you and a competitor, updating would benefit you both, which we don’t want. One exception would be when the link to the competitor is generic, while the link to you is more specific – primarily by architectural depth. Another exception would be when your link has better placement on the page.

Helping out a competitor is not necessarily a bad thing because relative gains are what matters. Don’t simply crawl the list and exclude any that also link to competitors.

You don’t have to stick to instances where no blindingly obvious replacement resource exists. You’re no worse off if you do contact in these cases. It’s not inappropriate to use mailmerge here, either. As always, you’ll have more success crafting by hand:

Subject: Broken Link {URL}

Hey,

I was on the {topic of page} page ({URL}), and I saw that the link to {anchor topic} ({URL}) was broken.

Just thought I should let you know.Risks & Benefits

This is primarily of benefit to old websites. When dealing with ancient resources, there is always a small chance that someone will pull down the page rather than update it. You may have had this happen before. You are significantly worse off if this happens.

As a webmaster, this is probably a bit less suspect than regular broken linkbuilding, so there’s less potential for backlash. You can approach as an anonymous internet user.

Building a single link somewhere else is probably a better use of your time. Like I said, this is unlimited budget SEO, and there are better uses for an unlimited budget than messing around with this. Maybe.

The most marginal of gains… Lets scale this up and also check if the pages linking to us also link to hacked sites too. If as we say money is no object we could also check the headers for 301s to shady content.

Good ideas Chris,

If you modify Xenu’s output a little, then Scrapebox’s Malware and Phishing filter would be a good way to gather the data you need. People are very eager to not link to hacked sites.

You can read an older version of that idea on Giuseppe Pastore’s blog. The 301s to shady content would be pretty easy to gather with this method, too.

Solid insights mate

Thanks Frank.