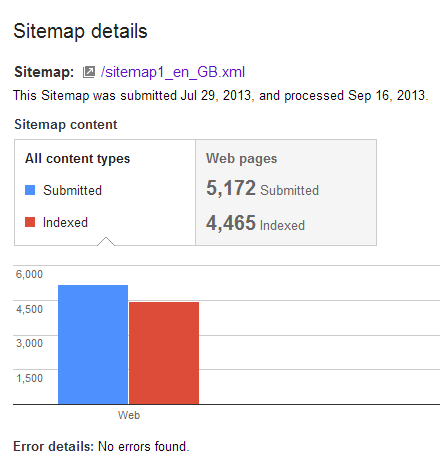

This shouldn’t be your first port of call for looking at indexation issues. Ideally, the site should have logically separated sitemaps to make any indexation problems easier to spot (e.g. is it the product pages that are suffering, or the subcategory pages?). The URLs in the sitemap should all return a 200 status code, and (nearly always) include only pages you want indexed (nothing blocked in robots.txt, or carrying restrictive meta directives, etc.). If you have something more esoteric causing the issues, then this post might help. In Webmaster Tools we get the familiar report shown above.

Being from GOOG, it shows us how many URLs are indexed (and how many aren’t), but not which URLs are in the index. This is useful for diagnosing at a high level, but can be decidedly unhelpful when your house is largely in order. This intentional unhelpfulness is frustrating to me, so I’ve tried a few methods to claw back these details. Scrapebox often gets used to check which of the URLs linking to your site are indexed. Long story short, instead of running the Check Index function on your tier 2 and 3 links, run it on your sitemap. If you’re not sure how to do that, read on.

I recommend you start by adjusting the number of connections you’ll be making to one. Google really doesn’t like being scraped in this way. Go to:

Settings > Adjust Maximum Connections > Index Checker

I typically set this number very low and use a large set of rotating proxies. This gives the best results for our purposes (if you’re seeing any messages about your proxies being banned, you’re going too hard, too fast). For small sitemaps (under 500 entries) you can afford to go a little faster, since the proxies won’t be strained for as long.

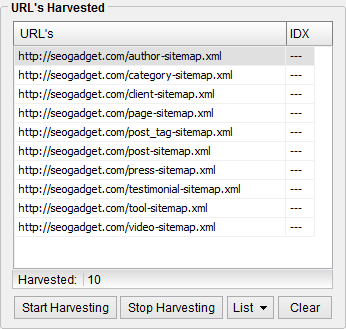

Getting the links out of the sitemap can be a bit of a pain, so use the Sitemap Scraper add-on to make this faster. First, copy the sitemap URLs and paste them into the URL harvester:

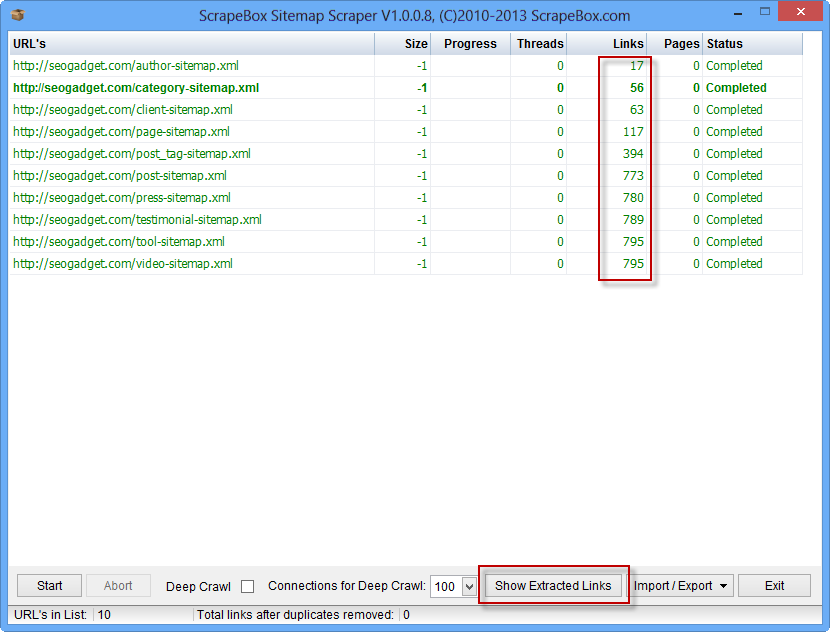

Then, open Addons > Sitemap Scraper and import the sitemap files from the Scrapebox Harvester. Click “Start”, and you’ll have link numbers for your sitemap/s:

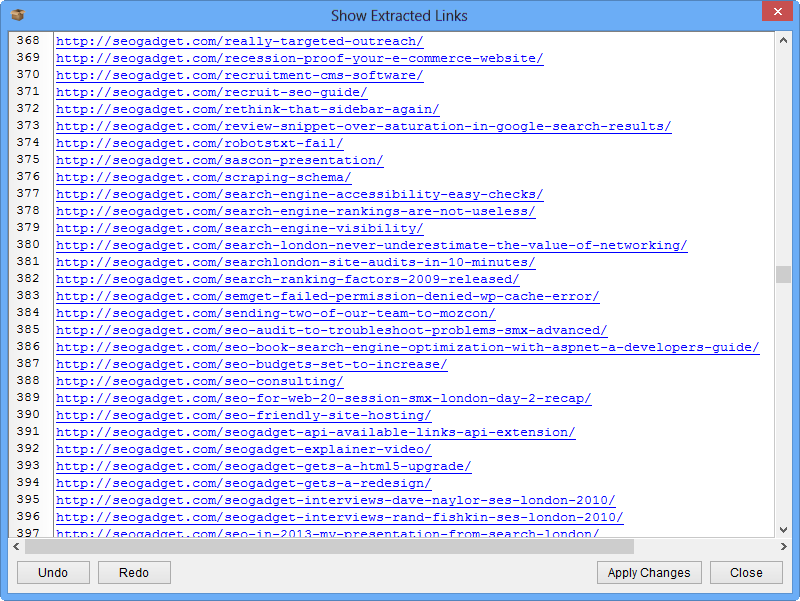

After it has run, click “Show Extracted Links”.

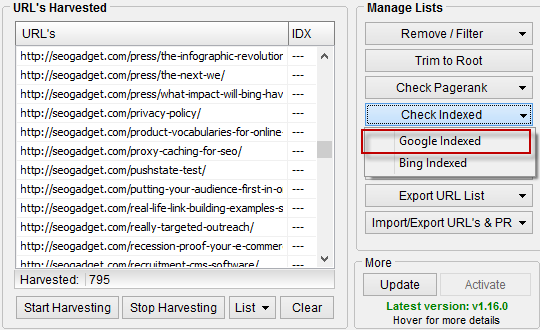
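If the add-on isn’t to hand, the same extraction can be roughly approximated outside Scrapebox. The sketch below is not how the Sitemap Scraper works internally (I don’t know that); it simply assumes a standard sitemaps.org XML file that you have already downloaded, and pulls every `<loc>` value out of it. The function name `extract_locs` and the sample URLs are made up for illustration.

```python
import xml.etree.ElementTree as ET

# Namespace used by standard sitemaps.org sitemap and sitemap index files.
SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"


def extract_locs(sitemap_xml: str) -> list[str]:
    """Return every <loc> value found in a sitemap (or sitemap index) document."""
    root = ET.fromstring(sitemap_xml)
    return [el.text.strip() for el in root.iter(f"{SITEMAP_NS}loc") if el.text]


# Hypothetical sample standing in for a downloaded sitemap file.
sample = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/products/widget</loc></url>
  <url><loc>https://example.com/category/tools</loc></url>
</urlset>"""

for url in extract_locs(sample):
    print(url)
```

Run against a sitemap index, this returns the child sitemap URLs rather than page URLs, so each of those would need to be fetched and parsed in turn.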

Copy and paste this list back into the URL harvester. Provided you are set up with proxies and very few connections, you can use the Check Google Indexed feature under Check Indexed on the right-hand side to start the process:

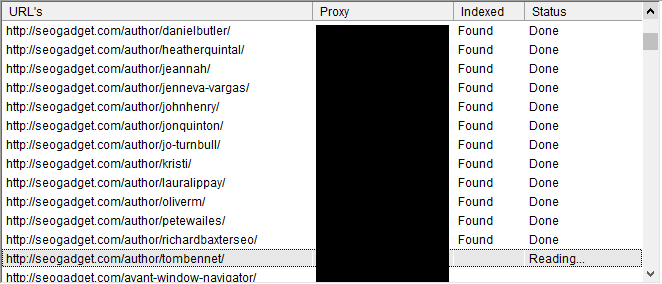

Once it is running, it should look something like this:

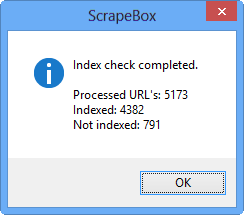

Once it’s finished checking the index status for each URL, this window will pop up to give you an overview (much like the original Google report):

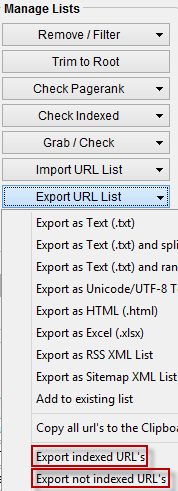

If you compare it to our numbers at the top you’ll see we’re doing well, with all the false positives on the right side of the line (the “indexed” report is completely accurate, while the “not indexed” report has a small margin of error). You can now export the indexed and not indexed URLs into a delimited .txt file by using the Export Indexed URL’s and Export not indexed URL’s functions in the Manage Lists menu.

From here you can use whatever spreadsheet program you like to break this out and start your analysis. I’d recommend comparing this to other data sources (crawl data, log files) to look for the non-obvious patterns in the data that may explain why the pages aren’t being indexed. Hopefully it will be fairly obvious what’s causing the indexation woes for these pages, and how they can be solved. It’s worth noting that I’ve only tried this on sitemaps of around 6,000 entries, which ran without any errors. Your mileage may vary on larger sites…
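If a spreadsheet feels clunky for a first pass, a few lines of script can do the same kind of breakdown. This is only a sketch: it assumes the two exported lists have already been loaded as plain lists of URLs, and that the first path segment (e.g. `/products/`) is a reasonable stand-in for the page type, which won’t be true of every site. The names `section_of` and `not_indexed_rate` and the sample URLs are invented for the example.

```python
from collections import Counter
from urllib.parse import urlparse


def section_of(url: str) -> str:
    """Return the first path segment, e.g. 'products' for /products/widget."""
    path = urlparse(url).path.strip("/")
    return path.split("/")[0] if path else "(root)"


def not_indexed_rate(indexed, not_indexed):
    """Map each section to (not indexed count, total count, share not indexed)."""
    hits = Counter(section_of(u) for u in indexed)
    misses = Counter(section_of(u) for u in not_indexed)
    report = {}
    for section in sorted(set(hits) | set(misses)):
        total = hits[section] + misses[section]
        report[section] = (misses[section], total, misses[section] / total)
    return report


# Hypothetical data standing in for the two exported lists.
indexed = [
    "https://example.com/products/a",
    "https://example.com/products/b",
    "https://example.com/category/x",
]
not_indexed = [
    "https://example.com/category/y",
    "https://example.com/category/z",
    "https://example.com/products/c",
]

report = not_indexed_rate(indexed, not_indexed)
for section, (missing, total, share) in report.items():
    print(f"{section}: {missing}/{total} not indexed ({share:.0%})")
```

A section with a noticeably higher share of missing URLs than the rest is a sensible place to start cross-referencing against crawl data or log files.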

Buy Backlinks – see here for more details (I’m a big believer in “don’t ask don’t get”).

In case you’re interested, Scrapebox uses info:URL to check indexation in Google, and link:URL to check Bing. Scrapebox support are very helpful.